Who doesn’t love Excel?! Since its first launch in 1985, it’s become the de-facto tool that replaces your calculator with tables that do the number-crunching automatically.

Today, millions of people use spreadsheets for list building, data analysis, and even task management. BUT Excel becomes everyone’s nightmare the moment you need to add new data to a spreadsheet. 🔪

Building a spreadsheet manually is awful. It glues your hands to the Control + C and Control + V shortcuts, and you have to switch from tab to tab for every field you want to copy.

But you are ahead of the pack. You’ve discovered that there are data extraction tools (aka scrapers) that can help you get data from websites to spreadsheets with just a few clicks. And zero copy-pasting.

This article will teach you how to copy data from websites to spreadsheets without copy-pasting. We will take a look at the best scraper tools and then go through a step-by-step tutorial to set one up for your unique use case. Let’s dive in!

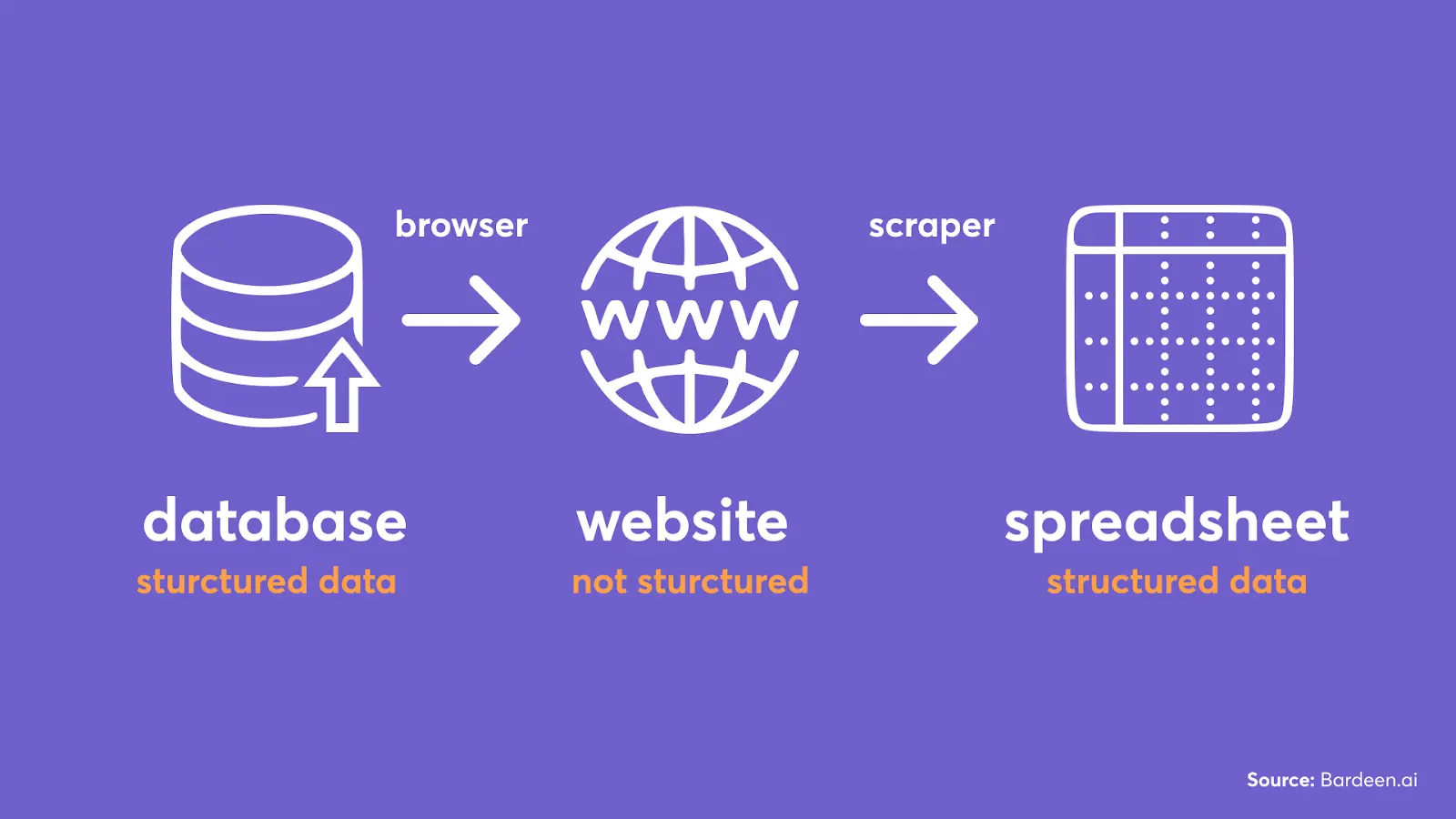

Before we get into the nitty-gritty of the web scraping tools and how to use them, here are the basics of how data extraction works.

In a nutshell, web scrapers take data from specific places on a website to create structured data, like a spreadsheet.

Imagine you select all text on a website and try to copy-paste it to Excel. You’d just end up with one cell with a bunch of text in it. Instead, scrapers get structured information from specific places on a web page so that the name of a lead, for example, gets pasted in the appropriate column in your spreadsheet.

The original data lives in companies’ databases, where it is structured. When you visit a website, this data gets pulled from that database and rendered by the browser, which strips away the data structure.

A web scraper tool will extract data from different places on a web page and put each item into the appropriate column. Scraper templates specify what data to extract from a particular website.
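To make the idea of a scraper template concrete, here is a minimal Python sketch using only the standard library. It is not how Bardeen or any other tool on this list works internally. The sample HTML, the class names (`job`, `title`, `location`), and the `TEMPLATE` mapping are all made up for illustration.

```python
import csv
import io
from html.parser import HTMLParser

# Hypothetical markup: each job is a <li class="job"> with one <span> per field.
SAMPLE_HTML = """
<ul>
  <li class="job"><span class="title">Staff Writer</span><span class="location">Remote</span></li>
  <li class="job"><span class="title">Copy Editor</span><span class="location">Austin, TX</span></li>
</ul>
"""

# The "template": spreadsheet column name -> CSS class holding that value (assumed names).
TEMPLATE = {"Job Title": "title", "Location": "location"}


class ListScraper(HTMLParser):
    """Collects one dict per list item, keyed by the template's column names."""

    def __init__(self, template, item_class):
        super().__init__()
        self.class_to_column = {cls: col for col, cls in template.items()}
        self.item_class = item_class
        self.rows = []
        self.current_column = None

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if self.item_class in classes:
            self.rows.append({})  # a new list item starts a new row
        for cls in classes:
            if cls in self.class_to_column and self.rows:
                self.current_column = self.class_to_column[cls]

    def handle_data(self, data):
        # Only keep text that sits inside an element named in the template.
        if self.current_column and data.strip():
            self.rows[-1][self.current_column] = data.strip()

    def handle_endtag(self, tag):
        self.current_column = None


def scrape_to_csv(html, template, item_class="job"):
    """Return the extracted rows and the same data serialized as CSV text."""
    parser = ListScraper(template, item_class)
    parser.feed(html)
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(template))
    writer.writeheader()
    writer.writerows(parser.rows)
    return parser.rows, buffer.getvalue()


rows, csv_text = scrape_to_csv(SAMPLE_HTML, TEMPLATE)
print(csv_text)
```

Each value lands in its own column because the template says where to look for it, which is exactly what a plain copy-paste of the page text cannot do.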

Update 2024: AI Scrapers are the new rage to extract data. We cover 8 of the top products in this category here.

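There are two types of scraper templates: list (or table) templates, which extract many similar items from one page, and single-page templates, which extract fields from one individual page.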

That’s all you need to know about scrapers for the majority of extraction jobs. 🪖 To learn more about no-code web scrapers, check out this article.

It’s time to look into three of the best apps for extracting data from websites to Excel. Here’s a quick comparison table:

| Tool | Chrome App | Operating Procedure | Cost Per Month |
|---|---|---|---|
| Bardeen | Yes | In-Browser | Free |
| Instant Data Scraper | Yes | In-Browser | Free |
| Octoparse | Yes | Cloud | $58-$167 (Free Trial Available) |

Let’s talk about all three of these in greater depth, focusing on cost, user-friendliness, and overall versatility.

Bardeen is a free workflow automation tool with a built-in scraper. It has dozens of ready-to-use scraper templates for websites like LinkedIn and Facebook, so you can start scraping in just a few minutes, right from your browser, without any technical setup.

Bardeen.ai has built 100+ ready-to-use scraper models.

In Bardeen, you can build custom scraper templates visually by clicking on the elements you want to extract.

One of the main benefits is that it works in the browser. This means you can get data from websites that require logins to view information.


And here is a game-changer feature that makes Bardeen #1 on this list. Bardeen integrates with other tools like Google Sheets, Notion, Airtable, and data-enrichment apps.

You can also get notified by email when something changes on the website.

In the next section, we’ll go through the setup step by step!

Bardeen is powerful in getting accurate information from single pages and lists. But it may take a few minutes to set up for each website that you want to scrape.

Instant Data Scraper can scrape lists with one click, without building scraper templates. It’s also a Chrome extension, and it automatically finds a list on the currently open page.

Instant Data Scraper predicts the list you’d like to scrape and automatically generates a CSV or XLSX file for you.

If the tool doesn’t find the correct list, you can click on “try another table.” If that doesn’t work either, you can manually configure which fields you want to extract. But this requires understanding a little bit of CSS and HTML.

Another disadvantage of Instant Data Scraper is that it can’t extract data from individual pages. For example, you can’t scrape LinkedIn profiles.

Octoparse is another web scraper app that can scrape both lists and single pages. The downsides are the cost and the time-consuming setup process.

Despite a bit of complexity beneath the surface, the basic scraping process is identical to almost every other tool. You enter a website URL, select data to extract, and run the extraction.

Octoparse is cloud-based, which makes it faster than scraping in the browser. There are also advanced features like IP rotation and scheduled scraping. Octoparse also offers a paid service to extract data for you.

Overall, Octoparse is a decent option if you need a more heavyweight solution for scraping web data to Excel.

It’s time to put what we’ve learned into action!

In this tutorial, we will scrape job posts from a website called ProBlogger using Bardeen.

If you want to leverage 100+ pre-built scraper templates, scroll down to the next section. Otherwise, stay here to learn how to build custom scrapers and integrate additional apps into your workflow.

💡️Reminder: scraper templates specify what data you want to extract from a page.

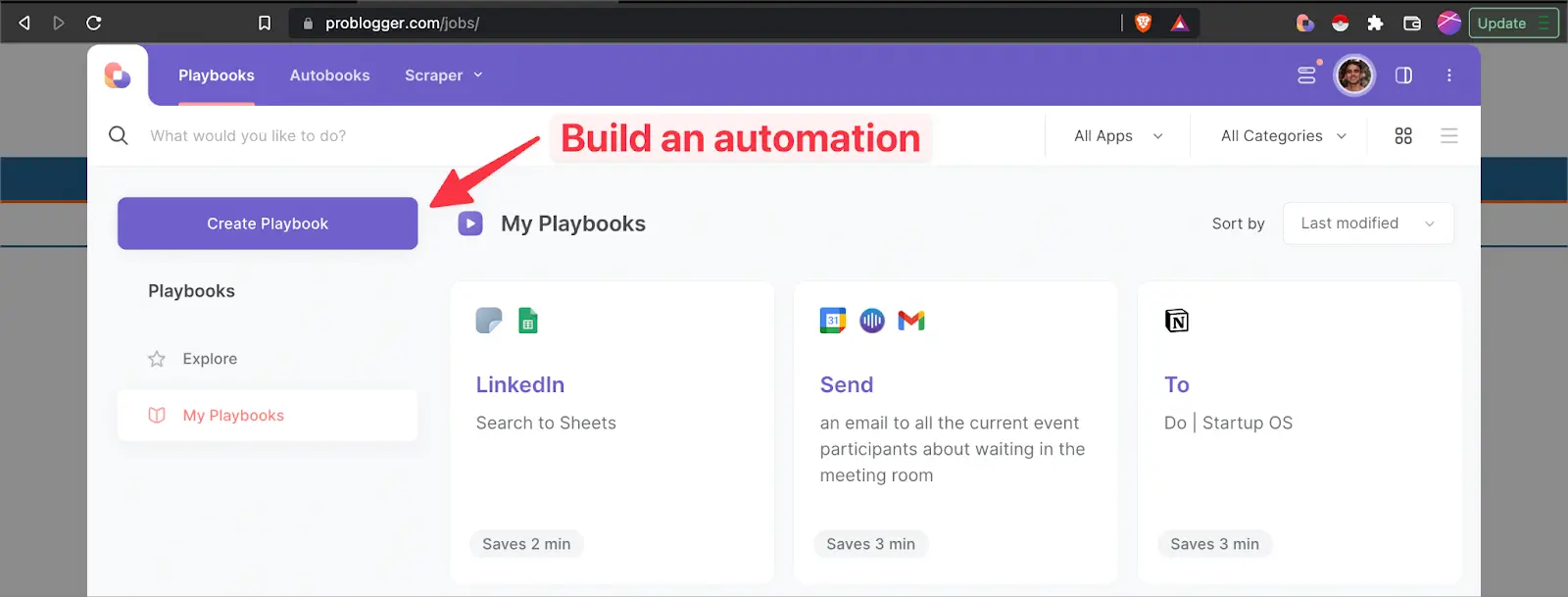

Go to the website that you want to scrape (like ProBlogger), click on the Bardeen extension icon, and then on “New Playbook.”

Inside the builder, click on the scrape action and find “scrape data on the active tab.”

Then click on “create new scraper template” and specify a website for this template.

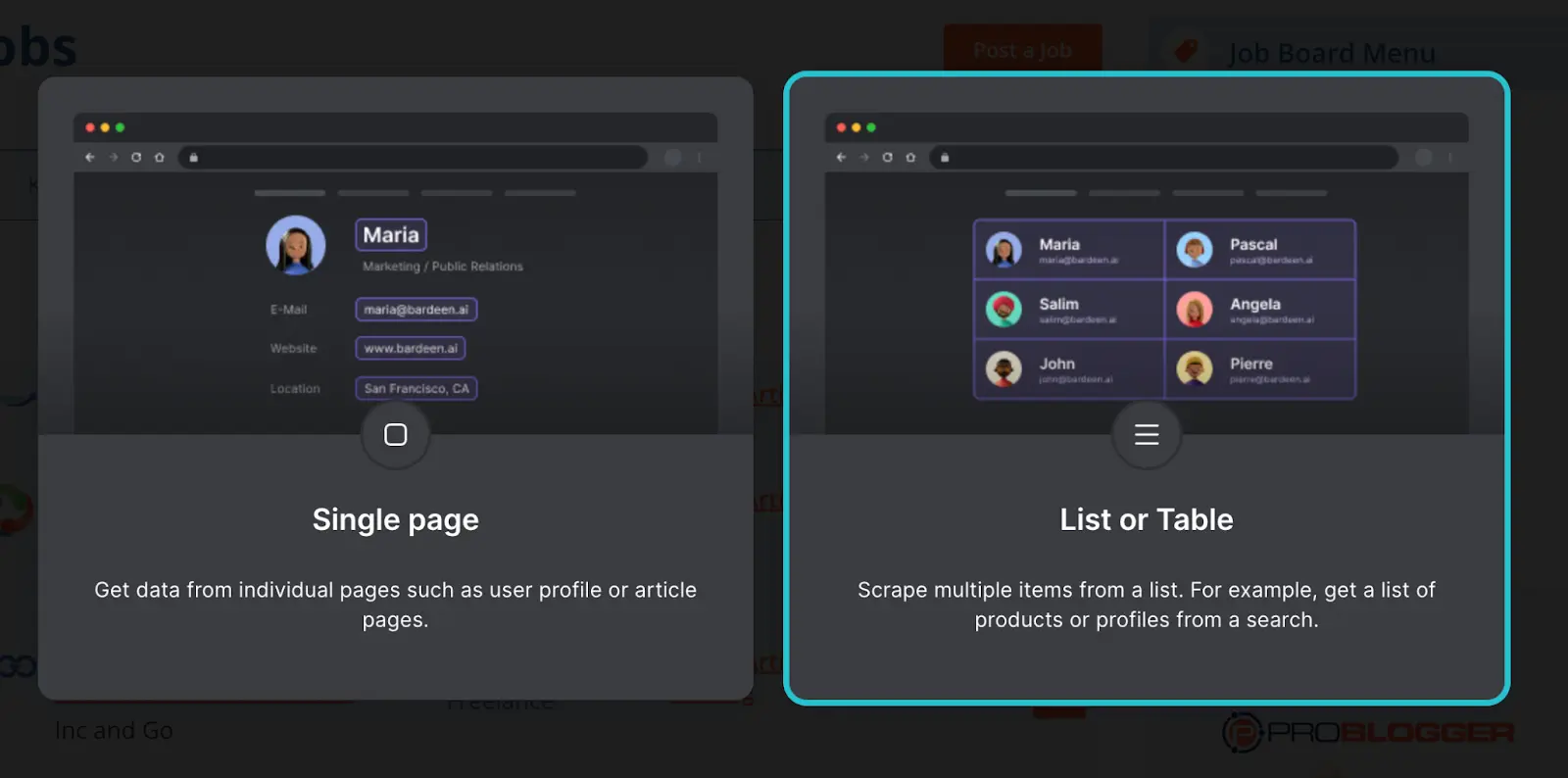

Now, you’ll have to choose between “Single page” or “List or Table.”

In our example of ProBlogger jobs, we have a list rather than an individual page.


Next, name your scraper template and click on “Start Building!”

Here we need to tell our tool which list we want to extract.

To do this, click the same element in two different list items.

Here, we selected the first two items on the jobs list. As highlighted in the screenshot, we clicked on the same data element (“job title”), but in two different list items.
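Why two clicks? Two examples are enough to tell what the items have in common and what differs between them. The Python sketch below shows one plausible way a tool could generalize from two clicked elements to the whole list. It is an illustration only, not Bardeen's actual method, and the selector paths and the `generalize_selector` helper are hypothetical.

```python
import re


def generalize_selector(path_a, path_b):
    """Merge two element paths into one selector that matches every list item.

    Steps that are identical are kept as-is. Steps that differ only by their
    :nth-child(...) position are assumed to be the repeating list item, so the
    position is dropped.
    """
    steps_a = path_a.split(" > ")
    steps_b = path_b.split(" > ")
    if len(steps_a) != len(steps_b):
        raise ValueError("the two elements are not at the same depth")

    general = []
    for a, b in zip(steps_a, steps_b):
        if a == b:
            general.append(a)
            continue
        base_a = re.sub(r":nth-child\(\d+\)$", "", a)
        base_b = re.sub(r":nth-child\(\d+\)$", "", b)
        if base_a != base_b:
            raise ValueError(f"cannot generalize {a!r} and {b!r}")
        general.append(base_a)
    return " > ".join(general)


# Hypothetical paths for the job title in the first and second list items.
first_click = "ul.jobs > li:nth-child(1) > h3"
second_click = "ul.jobs > li:nth-child(2) > h3"
list_selector = generalize_selector(first_click, second_click)
print(list_selector)
```

With only one click, there would be no way to know which part of the path identifies "this item" and which part identifies "this kind of field."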

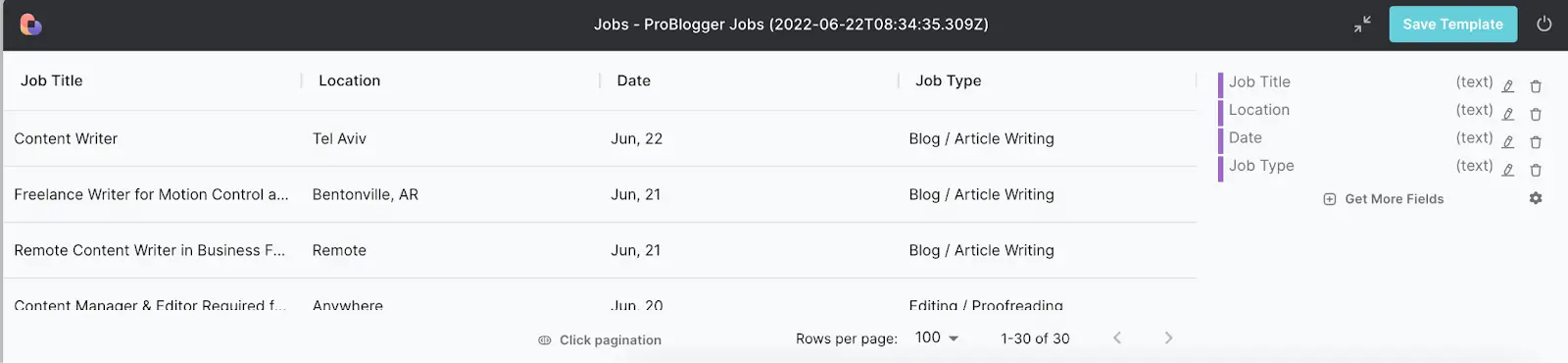

Here comes the fun part: picking elements that you want to extract from the website into your spreadsheet.

You can choose between six types of data: text, link, image, click, input, and attribute.

We’ve configured four data fields and named them Job Title, Job Type, Location, and Date. You can see this data in the table preview.

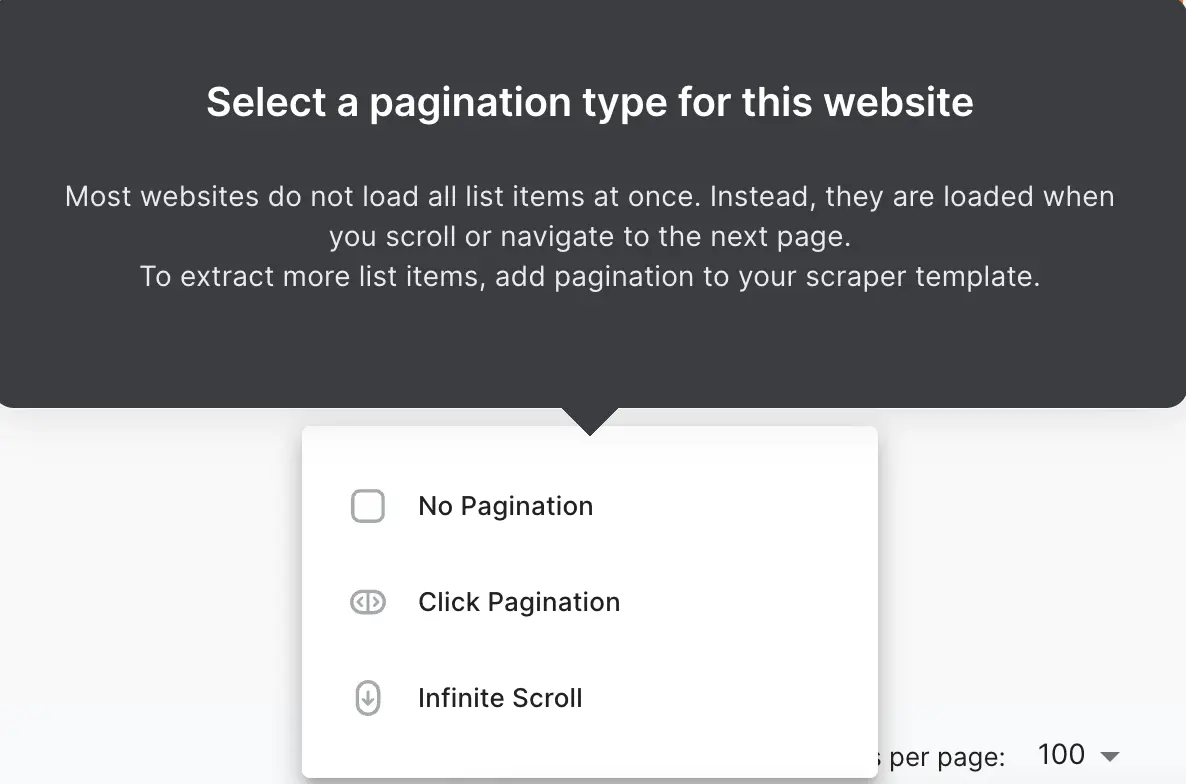

Although there are some exceptions, most websites (including ProBlogger) don’t load all the list items in an infinite list. Instead, they have pages.

To extract the ENTIRE list, we need to visit each page on that list. The good part is that it can be done automatically.

Pick “click pagination” to tell Bardeen where the “next page” button is located.

In our example, there are three pages, and the “>” icon takes us to the next page. Click on it.

Save the template!

When you save your scraper template, you will be taken back to the builder.

You can further customize the scrape action and pick the number of elements you will want to extract or leave it blank to always get the entire list.
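Conceptually, click pagination plus an optional item limit boils down to a simple loop: collect the items on the current page, follow the "next" button, and stop when there is no next page or the limit is reached. Here is a minimal Python sketch of that loop. The `FAKE_SITE` data and the `scrape_all_pages` function are made up for illustration and stand in for real page loads.

```python
# Hypothetical site: each page has some items and the URL of the next page (or None).
FAKE_SITE = {
    "/jobs?page=1": {"items": ["Staff Writer", "Copy Editor"], "next": "/jobs?page=2"},
    "/jobs?page=2": {"items": ["SEO Specialist"], "next": "/jobs?page=3"},
    "/jobs?page=3": {"items": ["Ghostwriter"], "next": None},
}


def scrape_all_pages(start_url, fetch_page, max_items=None):
    """Follow "next page" links and collect items from every page.

    max_items=None mirrors leaving the limit blank: the entire list is returned.
    """
    items = []
    seen = set()  # guards against a "next" link that loops back
    url = start_url
    while url is not None and url not in seen:
        seen.add(url)
        page = fetch_page(url)
        items.extend(page["items"])
        if max_items is not None and len(items) >= max_items:
            return items[:max_items]
        url = page["next"]
    return items


all_jobs = scrape_all_pages("/jobs?page=1", FAKE_SITE.__getitem__)
first_three = scrape_all_pages("/jobs?page=1", FAKE_SITE.__getitem__, max_items=3)
print(all_jobs)
print(first_three)
```

Passing the page loader in as `fetch_page` keeps the loop independent of how pages are actually loaded, so the same logic would apply whether a browser clicks the button or a script requests the URL.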

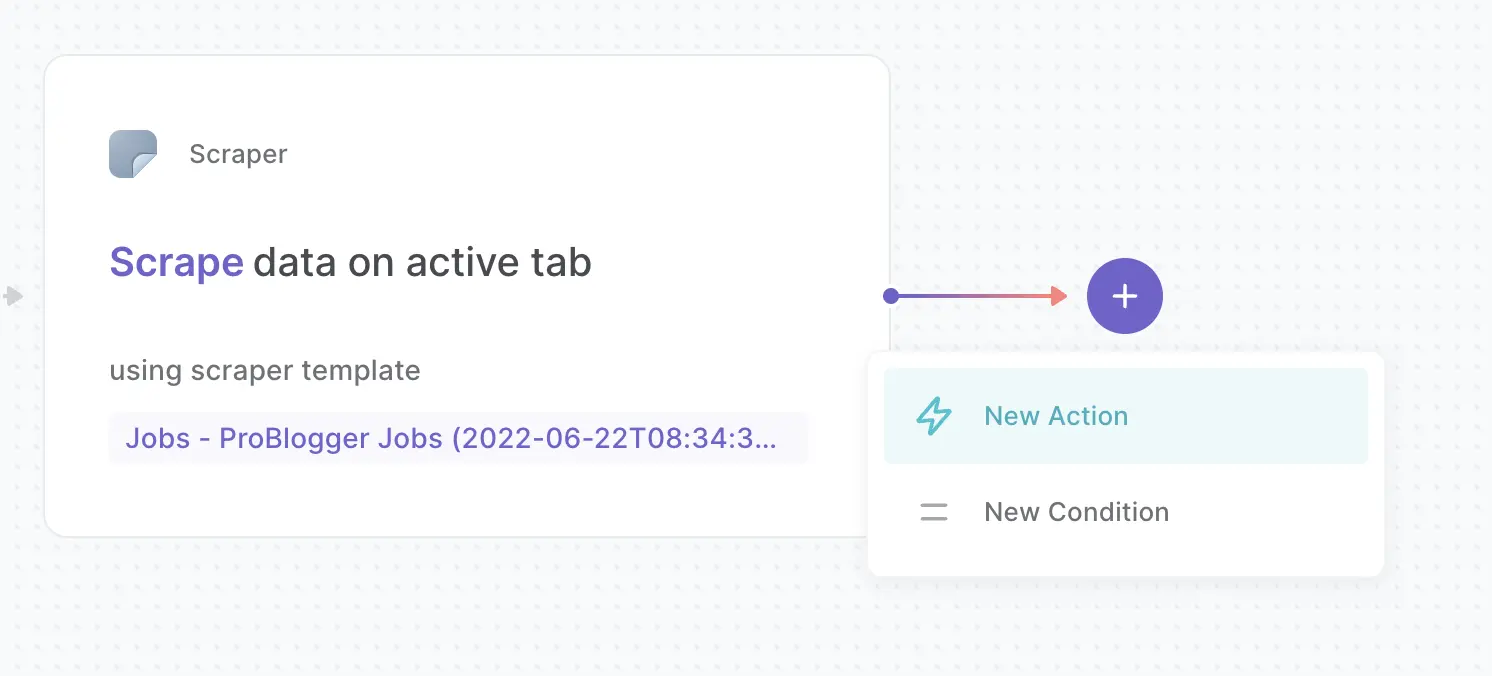

The builder is where you can customize your automation and add data to Sheets / Airtable and other apps.

Let’s add the Google Sheets action.

Add the integration and pick the action called “add rows to Sheet.”

Finally, map the data from the previous action to this action by selecting “Action 1.”
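Mapping means lining up each scraped field with the spreadsheet column of the same name. The Python sketch below illustrates the idea with plain lists. It does not call Google Sheets or Bardeen, and the sample records and helper names are hypothetical.

```python
def to_sheet_rows(records, columns):
    """Turn scraped records (dicts) into rows ordered like the sheet's columns."""
    # A field missing from a record becomes an empty cell instead of shifting the row.
    return [[record.get(column, "") for column in columns] for record in records]


def append_rows(sheet, records):
    """Append records below the header row; the first row names the columns."""
    header = sheet[0]
    sheet.extend(to_sheet_rows(records, header))
    return sheet


# Made-up sample data using the four fields from this tutorial.
sheet = [["Job Title", "Job Type", "Location", "Date"]]
scraped = [
    {"Job Title": "Staff Writer", "Job Type": "Full-time", "Location": "Remote", "Date": "Jan 5"},
    {"Job Title": "Copy Editor", "Location": "Austin, TX"},
]
append_rows(sheet, scraped)
for row in sheet:
    print(row)
```

Filling missing fields with empty cells keeps every value under the right header, even when a listing on the page leaves something out.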

Save the automation, and name it.

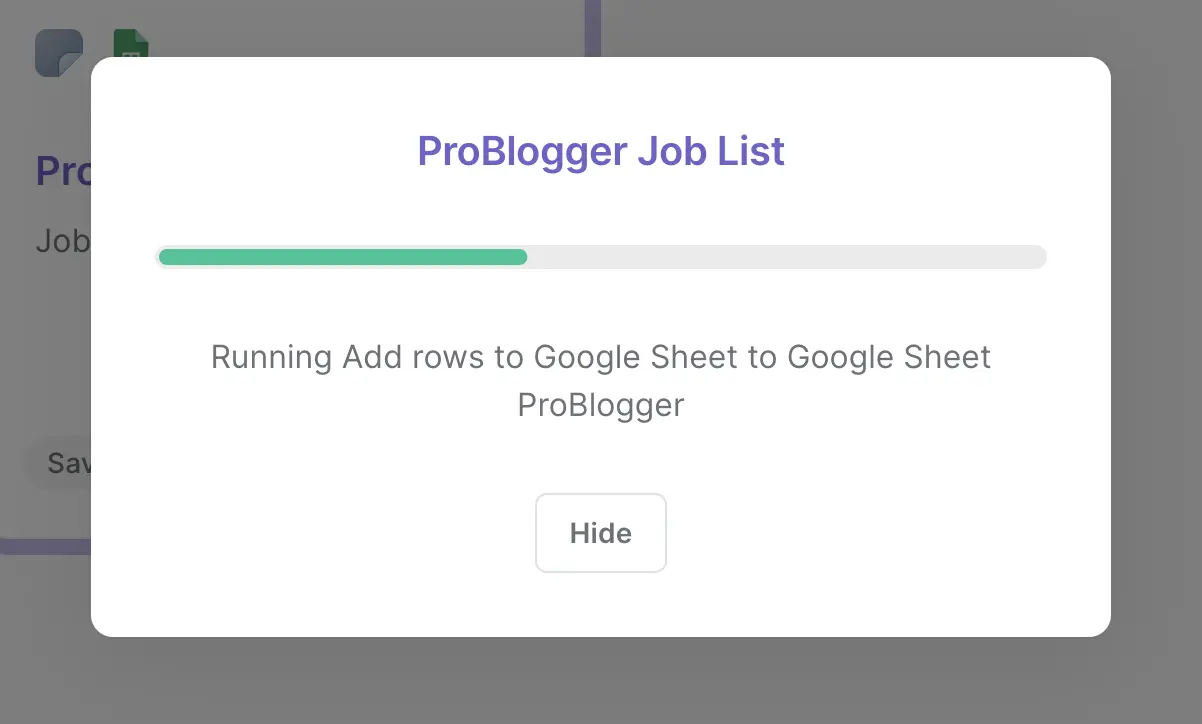

Now, you can run the automation with one click by clicking on a card. The scraping process will begin.

When done, a new window will pop up where you can view the results.

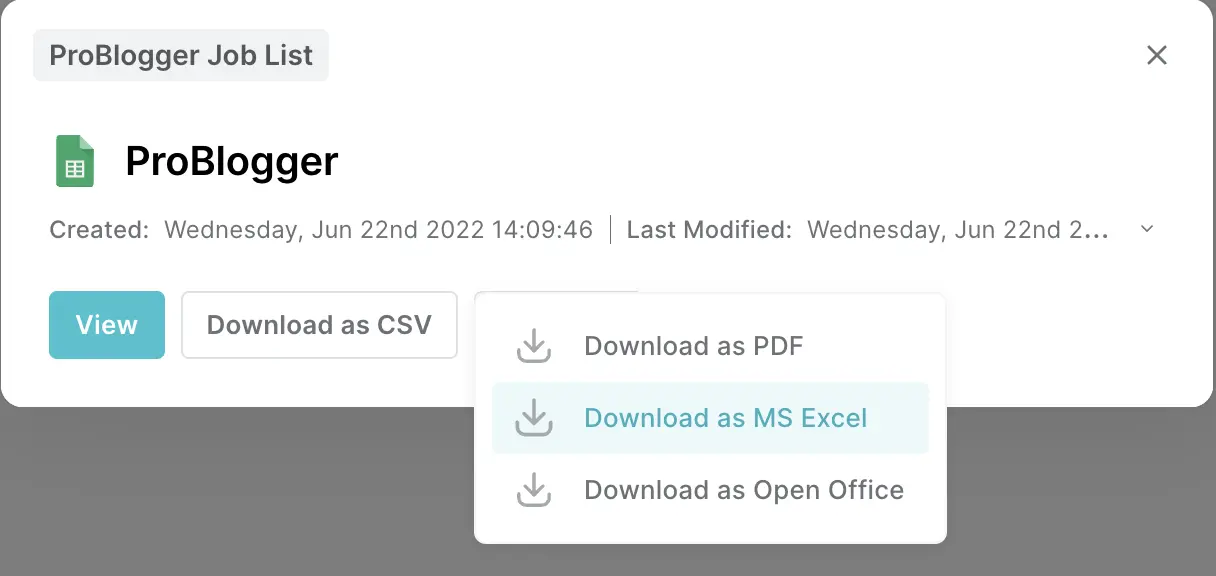

You can open the spreadsheet directly in Google Sheets or download it as a CSV, PDF, Excel, or Open Office file.

Pretty neat, right?

Here is how the end product looks in Google Sheets:

Of course, the scraper template will vary from website to website, but you only need to build a scraper template for a given website once.

For a deeper dive, check out our guide to no-code web scrapers and this web data scraping tutorial.

Here are the most popular pre-built scraper automations that you can use right away.

Save LinkedIn profile to Sheets: Build a leads list with a few clicks. Then you can enrich this data and leverage it for your outreach campaigns.

Copy TechCrunch articles for a keyword to Google Sheets: Want to stay on top of TechCrunch articles relevant to your company or industry in a Google Sheet? This Playbook has got you covered!

Copy top Product Hunt posts to Google Sheets: For those interested in being up to date with the latest Product Hunt posts, this Playbook will copy trending posts to a Google Sheet.

Get GitHub user profile data: In case you want to scrape data from a GitHub user profile, maybe for potential candidates or any other use case, this Playbook is perfect.

Copy current browser tab to Google Sheets: You know that feeling when you have a bunch of tabs opened but need to close a few to lower the load on your computer? This playbook will save the current browser tab to Google Sheets so you can open it later!

Beyond this, you can use data extraction tools for growth hacking, for example by scraping Facebook business pages or scraping data to Notion.

Congrats on learning how to extract data to Excel. Here are some additional questions you might have and how to handle them.

Yes! With Bardeen, you can view the extracted data in Google Sheets or download it as a CSV, PDF, Excel, or Open Office file. Instant Data Scraper works with CSV or XLSX file formats. Octoparse also offers a wide range of options.

This will depend on the website and its anti-scraper detection tools.

Generally, when using a web scraper, the chances of getting blocked are high if you use it at scale.

Companies find the idea of automated bots crawling through their websites kind of scary, and they do everything in their power to keep those bots at bay.

Fortunately, most websites are pretty safe to scrape. If you use Bardeen or Instant Data Scraper, it’s even safer because they work in your browser and use your clean IP.

Now you know how many hours of copy-pasting web scrapers can save you. You’ve learned about three of the best data extraction tools and how to leverage them for different use cases.

So, what are you waiting for? Go ahead and try one out yourself.

There might be a slight learning curve at first, but once you invest 10-15 minutes in learning data scraping, it will become your superpower and a massive competitive advantage.

Good luck!
